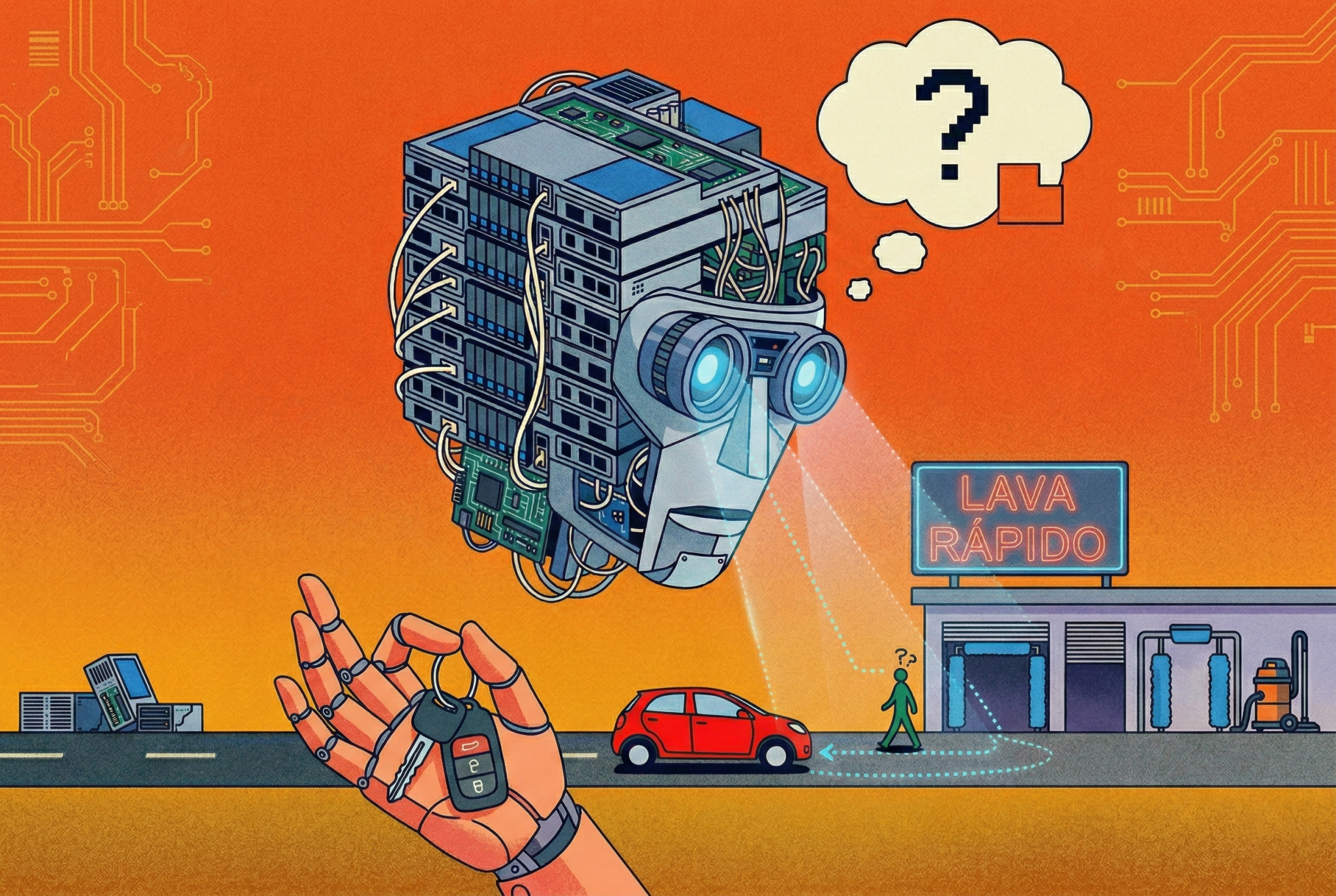

"Car Wash Dilemma" shows that AIs can make mistakes a human would find absurd; the STAR method, however, can help them produce better answers

A banal question from everyday motoring has become the latest thorn in the side of artificial intelligence: “I want to wash my car. The car wash is 100 meters away. Should I walk or drive?” Any driver would immediately answer that the car has to be driven there, yet the leading language models on the market, such as Claude, ChatGPT and Gemini, recommended walking. The reason for the failure is as simple as it is revealing: the models fail to infer that the car itself has to be at the car wash in order to be washed.

The so-called “car wash problem” was the subject of a study by independent researcher Heejin Jo, who sought to isolate the variables behind this failure. The work indicates that the models lack a perception of implicit physical constraints, something humans understand instinctively. In practice, on reading “100 meters”, the systems treat the question purely as a distance-optimization problem and justify walking on the grounds that the trip is a quick one- to two-minute walk.

To understand how to correct this reasoning, the study tested different prompt architectures. The results showed that simply supplying the physical details, such as the exact car model and the fact that the car is in the garage, produced a success rate of only 30%. The model receives the correct information but still takes a logical shortcut straight to the wrong conclusion.

The most effective solution was not to add more data but to change the structure of the AI’s reasoning. Applying a technique called STAR (Situation, Task, Action, Result), which forces the system to state its real goal (getting the car to the car wash) before generating a response, raised accuracy from 0% to 85%.
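To make the idea concrete, here is a minimal Python sketch of how a STAR-style prompt could be assembled around the car wash question. The section wording and the `build_star_prompt` helper are hypothetical illustrations: the article does not publish the prompt used in the study, and the sketch only builds the prompt text; it does not call any particular model API.

```python
# Hypothetical STAR scaffold: the section wording below is illustrative,
# not the prompt used in the study.
STAR_SECTIONS = (
    ("Situation", "Describe the current state of things, including where every relevant object is."),
    ("Task", "State the real goal in one sentence: what must be true when you are done?"),
    ("Action", "List the steps needed to reach that goal, checking each against physical constraints."),
    ("Result", "Only now give the final answer, and confirm it achieves the Task."),
)


def build_star_prompt(question: str) -> str:
    """Wrap a question in a STAR (Situation, Task, Action, Result) scaffold.

    The model is asked to fill in each section in order, so it has to state
    its goal (the Task) before it is allowed to answer (the Result).
    """
    lines = [
        question.strip(),
        "",
        "Before answering, work through the following sections in order:",
    ]
    for name, instruction in STAR_SECTIONS:
        lines.append(f"{name}: {instruction}")
    return "\n".join(lines)


if __name__ == "__main__":
    question = (
        "I want to wash my car. The car wash is 100 meters away. "
        "Should I walk or drive?"
    )
    print(build_star_prompt(question))
```

The design choice mirrors the article's point: the scaffold adds no new facts about the car, it only changes the order in which the model must reason, placing the goal ahead of the answer.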

The episode is a wake-up call for how the technology is applied today. According to the research, a system’s intelligence does not depend on the volume of information it stores. In the end, the machine’s logic needs to imitate human logic: having the facts is not enough; you also have to remember to grab the keys before leaving home.